Undecidability (Briefly)

I want to tell you a story—with only some embellishment.

How do we count?¶

First, some context. How do we count things? Does \(\{1,2,3\}\) have the same number of elements as \(\{A, B, C\}\)? What about \(\mathbb{N}\) vs. \(\mathbb{N} \cup \{BrownU\}\)? If we're comparing infinite sets, then it seems reasonable to say that they have the same size if we can make a bijection between them: a 1-1 correspondence.

But then, counter-intuitively, \(\mathbb{N}\) and \(\mathbb{N} \cup \{BrownU\}\) are the same size. Why? The idea is encoded in a thought experiment called Hilbert's Hotel: suppose you work at the front desk of an infinite hotel. You have a guest room for every natural number. Tonight, every room is occupied. But then a new guest arrives. Can you find room for them?

Think, then click!

Yes! Here's how. For every room \(i\), tell that guest to move into room \(i+1\). You'll never run out of rooms, and room 0 will be free for the new guest. Every guest will need to do a finite amount of work, but assuming we can send this message to everyone at once, it works out.

Let's go back in time to the late 1800's. Mathematicians wanted to know: is every infinite set the same cardinality? Are all infinities one, in a philosophical sense?

In 1874, a mathematician named Georg Cantor published an article showing that not all infinites are the same size. This was groundbreaking, and extremely controversial. Mathematicians later said that his ideas came 100 years before the community was ready for them. Hilbert himself actually said (decades later) that "No one shall drive us from the paradise Cantor has created for us." A pretty ringing endorsement from one of the greatest then-living mathematicians.

But at the time, the controversy motivated him to develop an alternative proof, which he published in 1891. This is the version we usually talk about in set-theory classes today: The power set of \(\mathbb{N}\), that is, the set of subsets of \(\mathbb{N}\), is of strictly larger cardinality than \(\mathbb{N}\).

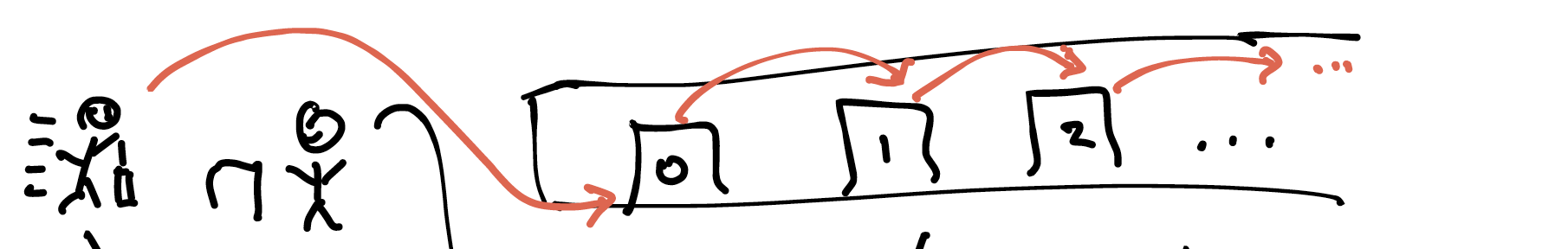

How did Cantor prove this? By contradiction. Assume you're given a bijection between a set \(\mathbb{N}\) and its power set. Now, this bijection can be thought of as an infinite table, with rows indexing subsets of \(\mathbb{N}\) and columns containing natural numbers. The inner cells say whether or not that set (i.e., row index) contains a given natural number (i.e., column label). If the two can be put into correspondence, then it should be possible to place every possible subset as a row in the table.

| Index | 0 | 1 | 2 | ... |

|---|---|---|---|---|

| 0 | 0 | 0 | 0 | ... |

| 1 | 1 | 0 | 0 | ... |

| 2 | 1 | 1 | 0 | ... |

| 3 | 1 | 1 | 1 | ... |

| ... | ... | ... | ... | ... |

Cantor showed that there must always be a subset of \(\mathbb{N}\) that isn't represented as a row in the table. That is, such a bijection cannot exist. Even with the very permissive definition of "same size" we use for infinite sets, there are still more subsets of the natural numbers than there are natural numbers. So: what is the subset that can't be represented as a row in the table?

Think, then click!

Read off the diagonal from the top-left onward, and flip each bit. In the table above: row 0, column 0 is 0, so the diagonal set contains 0. Row 1, column 1 is 0, so the diagonal set contains 1. Row 2, column 2 is 0, so it contains 2. Row 3, column 3 is 1, so it does not contain 3. And so on.

This technique is called "Cantor diagonalization".

How many programs are there?¶

Why does this matter to US? Let me ask you two questions:

QUESTION 1: How many syntactically-valid Java program source files are there?

Think, then click!

There are infinitely many. But let's be more precise. The source code of any program is a finite text file. The size may be unbounded, but that's not the same as infinite. And the alphabet used for each character is also finite (let's say between 0 and 255, although that isn't always entirely accurate).

Thus, we can think of a program source file as a finite sequence of numbers between 0 and 255. We can encode a Java program's code as a natural number! There cannot be more Java program source files than there are natural numbers.

QUESTION 2: How many mathematical functions from non-negative integer inputs to bool outputs are there? (Don't worry about 32-bit vs. 64-bit vs. bignums: we're talking about mathematics.)

Think, then click!

Each such function returns true or false for any given non-negative integer. In effect, it is defining a specific set of numbers. There are as many such mathematical functions as there are sets of natural numbers.

Try as you might, it is impossible for any programming language to express every predicate on numbers. Any given language cannot express some mathematical concepts—indeed, the overwhelming majority of them!

It's not easily fixed.

You might be tempted to say: let's just define many different programming languages. But think: how do we define a new programming language? Is each definition finite? Then consider the version of Hilbert's hotel where a new guest arrives for every natural number.

But can't programs only work with finite data?

Moreover, you might know that no finite subsets of the naturals have cardinality larger than the naturals themselves. (This is true.) Since computers only work with finite data, doesn't that make this all a non-issue? Unfortunately, no. We write programs that represent infinite sets all the time. Here's one written in Python: lambda x: x % 2 == 0.

Programs simply have unavoidable limits to their expressive power. But maybe all the inexpressible things don't matter, because nobody actually needs or cares about them? That would be comforting. But it's not true, either.

AI can't escape this problem.

There's a lot of speculation about whether artificial intelligence will eventually exceed our current capabilities. Personally, I think that the answer is "yes" in many ways—but not here. We're talking about a limit baked into mathematics itself. None of the popular-science speculation that I've seen (e.g., quantum computing) escapes this constraint. We'd need to somehow "move the barber out of town".

Another Story: Halting¶

It's the early 1900's. Hilbert (him again) and many others are wondering whether the study of mathematics can be mechanized. Can we write a procedure that finds proofs for us? More precisely (using today's terms) is it possible to write a program that accepts a conjecture and: - always terminates in finite time; - returns a proof if the conjecture holds; - returns a counterexample if the conjecture doesn't hold. (Does this sound familiar?)

Then, in the 1930's, Kurt Gödel, Alonzo Church, Alan Turing, and others showed that the answer was no—at least, not completely. Different courses cover this in different ways (you can even use diagonalization to prove this!). But here's the most common one:

Write for me a program h(f, v) that accepts two arguments:

* another program (f); and

* an input to that program (v).

It must:

* always terminate;

* return true IFF f(v) terminates in finite time; and

* return false IFF f(v) does not terminate in finite time.

Suppose h exists, and can be embodied in whatever language we're using. Now we can write this other program:

Why is this program a problem?

For your convenience, I've added comments to h:

Argh! No matter how clever we are, h cannot exist. This is called the halting problem: it is impossible to write a program that (in finite time, without error) says whether another program terminates on a given input.

What are consequences for us? It's OK to be philosophical or uncertain.

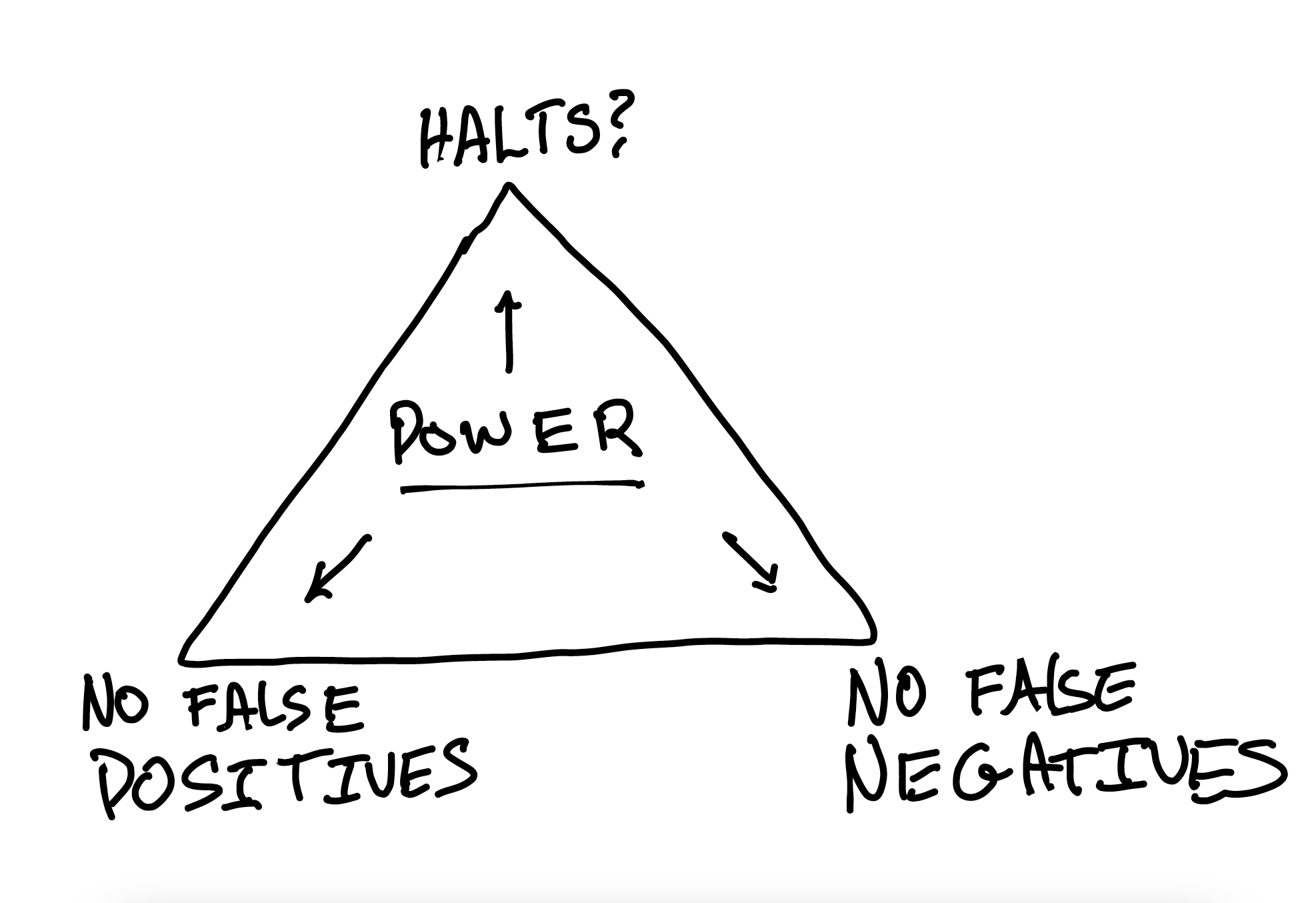

It turns out that it's impossible to write an always-terminating, always-correct oracle for an arbitrary program's behavior—if the two languages have the same expressive power. This leads to what I call the Triangle of Existential Despair, inspired by the maxim: "Fast, cheap, and good: pick two". If we want to analyze a program (in the general case, with no restrictions on the language), we have to give something up: termination, soundness, or completeness.

This is a major reason why domain-specific languages are so useful. We know how to analyze regular expressions, but if they were encoded as arbitrary programs, analyzing them would be much harder unless the program was structured a specific way.

What Does This Have To Do With SMT?¶

Church and Turing (building on Gödel's work) proved that number theory is undecidable: if you've got the natural numbers, along with multiplication and addition, it is impossible to write an algorithm that answers arbitrary questions about number theory in an always correct way, in finite time. So we should expect a theory-solver for integer arithmetic to be incomplete.

There's a lot more we could talk about. The area is technically deep, and beyond the scope of this book. But here are two more true statements about the world:

- If we give up multiplication, and just keep addition, an algorithm exists and we know it.

- If we keep both addition and multiplication, but switch to real numbers instead of integers, an algorithm exists and we know it. (It's slow, but it exists and always terminates.)